Abstract

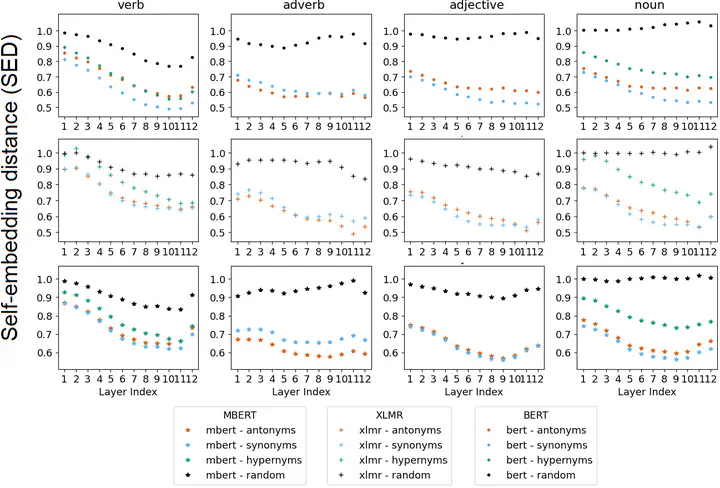

Modern language models are capable of contextualizing words based on their surrounding context. However, this capability is often compromised due to semantic change that leads to words being used in new, unexpected contexts not encountered during pre-training. In this paper, we model semantic change by studying the effect of unexpected contexts introduced by lexical replacements. We propose a replacement schema where a target word is substituted with lexical replacements of varying relatedness, thus simulating different kinds of semantic change. Furthermore, we leverage the replacement schema as a basis for a novel interpretable model for semantic change. We are also the first to evaluate the use of LLaMa for semantic change detection.

Type

Publication

Analyzing Semantic Change through Lexical Replacements